FedML Closes $11.5 Million Seed Round to Help Companies Build and Train Custom Generative AI Models

SUNNYVALE, Calif.--(BUSINESS WIRE)--FedML today announced that it closed $11.5 million in seed funding to expand development and adoption of its distributed MLOps platform, which helps companies efficiently train and serve custom generative AI and large language models using proprietary data, while reducing costs through decentralized GPU cloud resources shared by the community.

The oversubscribed funding round was fueled by growing investor interest and market demand for large language models, popularized by OpenAI, Microsoft, Meta, Google and others. Many businesses are eager to train or fine-tune custom AI models on company-specific or industry data, so they can use AI to address a range of business needs, including customer service, business automation, content creation, software development and product design.

Unfortunately, custom AI models are prohibitively expensive to build and maintain due to high data, cloud infrastructure and engineering costs. Those costs are growing due to the huge demand for GPU resources, leading to shortages worldwide. Moreover, the proprietary data for training custom AI models is often sensitive, regulated and/or siloed.

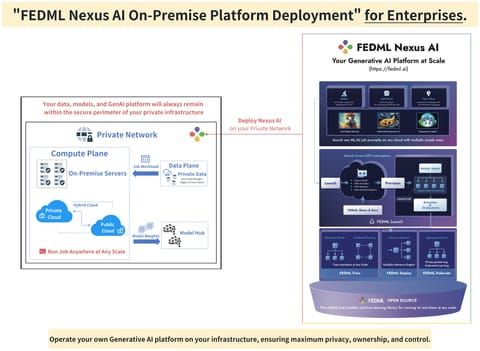

FedML overcomes these barriers through a “distributed AI” ecosystem that empowers companies and developers to work together on machine learning tasks by sharing data, models and compute resources – fueling waves of AI innovation beyond large technology companies. Unlike traditional cloud-based AI training, FedML supports distributed machine learning across both edge and cloud resources, through innovations at three AI infrastructure layers:

- A powerful MLOps platform that simplifies training, serving, and monitoring generative AI models and LLMs in large-scale device clusters including GPUs, smartphones, or edge servers;

- A distributed and federated training/serving library for models in any distributed setting, making foundation model training/serving cheaper and faster, as well as leveraging federated learning to train models across data silos; and

- A decentralized GPU cloud to reduce the training/serving cost and save time on complex infrastructure setup and management via a simple “fedml launch job” command.

“We believe every enterprise can and should build their own custom AI models, not simply deploy generic LLMs from the big players,” said Salman Avestimehr, co-founder and CEO of FedML, and inaugural director of the USC + Amazon Center on Secure & Trusted Machine Learning. “Large-scale AI is unlocking new possibilities and driving innovation across industries, from language and vision to robotics and reasoning. At the same time, businesses have serious and legitimate concerns about data privacy, intellectual property and development costs. All of these point to the need for custom AI models as the best path forward.”

FedML recently introduced FedLLM, a customized training pipeline for building domain-specific large language models on proprietary data. FedLLM is compatible with popular LLM libraries such as HuggingFace and DeepSpeed, and is designed to improve the efficiency, security and privacy of custom AI development. To get started, developers only need to add a few lines of source code to their applications. The FedML platform then manages the complex steps to train, serve and monitor the custom LLMs.

“Distributed machine learning will play a more important role in the era of large models,” said Chaoyang He, co-founder and CTO of FedML, who created the FedML library during his PhD study. “FedLLM is only our first step, allowing large models to see larger-scale data, so developers can exert its power on a huge number of parameters. We will upgrade FedLLM into a generic LLMOps over time, based on our innovations in MLOps, distributed ML frameworks, and decentralized computing power.”

Since launching in March 2022, FedML has quickly become a leader in community-driven AI. It hosts the top-ranked open-source library for federated machine learning, which surpassed Google’s TensorFlow Federated in November 2022. FedML’s platform is now used by more than 3,000 users globally, who have run more than 8,500 training jobs across more than 10,000 edge devices. FedML has also secured more than 10 enterprise contracts spanning healthcare, retail, financial services, smart home/city, mobility and more.

The $11.5 million seed round includes $4.3 million in a first tranche previously disclosed in March, plus $7.2 million in a second tranche that closed earlier this month. The seed round was led by Camford Capital, along with additional investors Road Capital, Finality Capital Partners, PrimeSet, AimTop Ventures, Sparkle Ventures, Robot Ventures, Wisemont Capital, LDV Partners, Modular Capital and the University of Southern California (USC). FedML previously raised nearly $2 million in pre-seed funding.

“FedML has a compelling vision and unique technology to enable open, collaborative AI at scale,” said Ali Farahanchi, partner at Camford Capital. “Their leadership team combines humility, hard work and perseverance with deep technical capabilities, and they’ve already made strong progress. In a world where every company needs to harness AI, we believe FedML will power both company and community innovation that democratizes AI adoption.”

“We are excited by the opportunity that FedML is pursuing, led by Dr. Avestimehr and Dr. He,” said Thomas Bailey, founding general partner of Road Capital. “With industries rushing to develop AI applications, FedML’s design can hopefully bridge AI and the decentralized web and unlock a rich marketplace for machine learning data, resources, and know-how.”

“FedML’s decentralized machine learning solution promises to create a large economy and marketplace for machine learning resources (data, compute, and open-source models), especially as the generative AI revolution is unfolding rapidly,” said Adam Winnick, General Managing Partner at Finality Capital Partners. “We invested with Salman and his team because we believe their existing decentralized compute network and deep reservoir of intellectual capital can deliver on that promise now.”

FedML was co-founded by Avestimehr, a Dean’s professor at USC and the inaugural director of the USC + Amazon Center on Secure & Trusted Machine Learning, and his former PhD student Dr. Chaoyang He, who has published several award-winning papers and has more than 10 years of R&D experience at Google, Amazon, Facebook, Tencent and Baidu. Over the past four years, Avestimehr and He have worked with nearly 40 collaborators to build FedML’s open-source library and commercial software, which combine federated learning tools with an industrial-grade MLOps platform and secure data marketplace.

About FedML

FedML is a leader in custom AI development, using distributed AI and federated learning to help companies build and train their own AI models. FedML’s enterprise software platform and open-source library empower developers to train, deploy and customize models across edge and cloud nodes at any scale. FedML’s distributed MLOps platform uniquely enables sharing of data, models, and compute resources in a way that preserves data privacy and security. The company hosts the top-ranked GitHub library for federated learning, and its platform is used by more than 3,000 developers globally and 10 enterprise customers spanning multiple industries.